The Zero Human Company Already Exists. The Question Is Who Governs It.

The zero human company already exists.

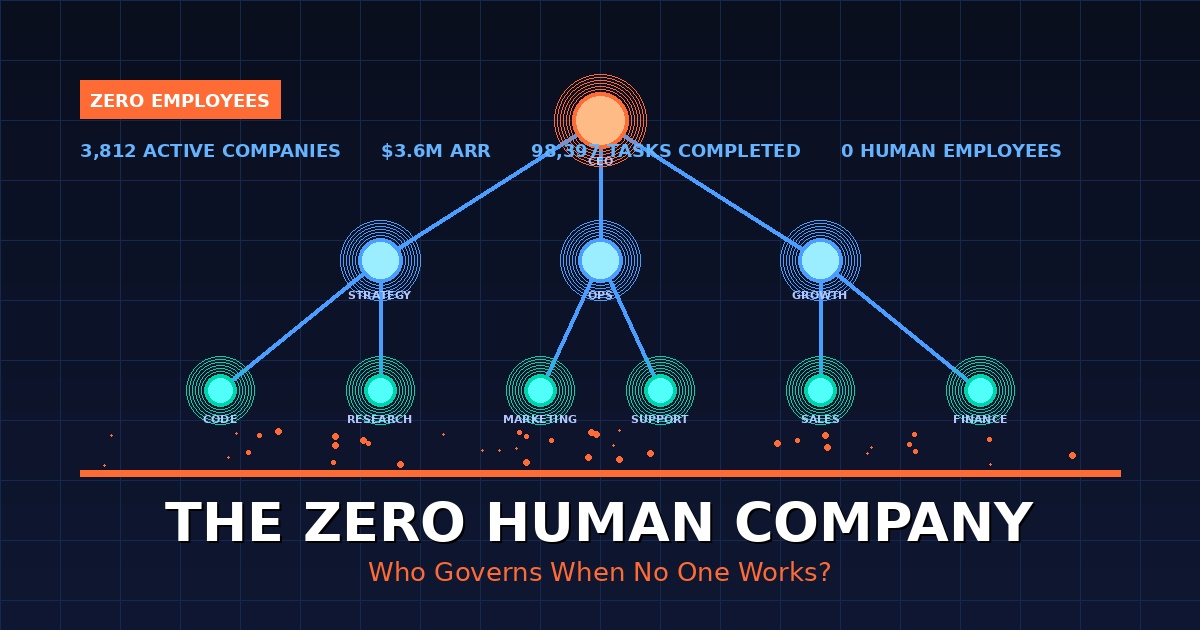

By March 2026, a platform called Polsia reported 3,812 active autonomous companies generating $3.6M in annual run rate, all operated by AI agents without a single operational employee. FelixCraft, another autonomous platform, reported $78,000 in revenue in 30 days from an AI-run guidebook product. These are not prototypes living in sandbox environments. They are live businesses writing code, sending customer emails, running ad campaigns, and processing payments with no human in the loop between the decision and the action.

Most conversations about AI and business have been pointed at the wrong problem. The real disruption is not labor displacement. It is accountability dissolution.

The zero human company (ZHC) has arrived, and engineering leaders who treat it as a distant future risk are already behind.

What a Zero Human Company Actually Is

Most definitions conflate automation with autonomy. They are not the same thing.

A company that uses AI tools still has humans making decisions. A company that automates workflows still has humans reviewing outputs. A zero human company has neither. No employee sits between an agent's decision and the action that follows it. The agent assesses market conditions, decides to launch a new product, builds it, ships it, markets it, and iterates based on results. A human founder may set initial capital or broad direction. After that, the agents work.

The critical technical distinction is this:

- Autonomous company: AI operates the company but humans retain specific roles.

- Zero human company: No human performs any operational function. Think of it as the difference between a self-driving car with a safety driver and one with an empty seat.

- The default is action: In a ZHC, agents don't await approval. They act. Humans can intervene, but intervention is the exception, not the flow.

Paperclip, the open-source orchestration framework built on Anthropic's Claude Code, models this as an org chart rather than a workflow. Agents hire other agents. A CEO agent sets goals. Manager agents assign tasks. Worker agents execute. Results travel back up the chain. The structure is recursive. A manager can fire an underperforming agent and hire a replacement mid-operation.
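
The org-chart model can be sketched as a small recursive tree. This is a minimal illustration, not Paperclip's actual API: the `Agent` class, its `hire`, `fire`, and `collect` methods, and the role names are all hypothetical, and "results" are plain strings standing in for real work.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Agent:
    """A node in a hypothetical agent org chart (not Paperclip's real API)."""
    name: str
    role: str  # e.g. "ceo", "manager", "worker" (assumed role names)
    reports: list[Agent] = field(default_factory=list)
    results: list[str] = field(default_factory=list)

    def hire(self, name: str, role: str) -> Agent:
        """Add a direct report and return it."""
        agent = Agent(name, role)
        self.reports.append(agent)
        return agent

    def fire(self, name: str) -> bool:
        """Remove a direct report by name; True if someone was removed."""
        before = len(self.reports)
        self.reports = [a for a in self.reports if a.name != name]
        return len(self.reports) < before

    def collect(self) -> list[str]:
        """Gather results from this agent and everything below it."""
        gathered = list(self.results)
        for report in self.reports:
            gathered.extend(report.collect())
        return gathered


ceo = Agent("ceo-1", "ceo")
manager = ceo.hire("mgr-1", "manager")
worker = manager.hire("wrk-1", "worker")
worker.results.append("landing page shipped")

# The manager replaces an underperforming worker mid-operation.
manager.fire("wrk-1")
replacement = manager.hire("wrk-2", "worker")
replacement.results.append("landing page rewritten")
```

Because `collect` recurses through `reports`, results travel back up the chain from any depth. In this sketch a fired agent's results leave the tree with it; a real system would presumably keep them in an audit record.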

The Architecture That Makes This Possible

Four converging capabilities enabled the ZHC in 2025 and 2026. Remove any one of them and the model collapses.

- Long-context reasoning: Modern frontier models maintain coherent plans across multi-step operations that would have caused drift or hallucination in earlier models.

- Reliable tool use: Agents can call APIs, deploy code, send emails, and process payments with consistent accuracy. Tool calls no longer require babysitting.

- Multi-agent orchestration: Frameworks like Paperclip (31,000 GitHub stars as of early 2026) provide the management layer that turns parallel agents into a coordinated workforce with org charts, budgets, and audit trails.

- Extended autonomy architecture: Anthropic designed Claude Code specifically for multi-step task sequences that require no human prompting between steps.

KPMG and the University of Amsterdam discovered a key architectural lesson through their zero-person company experiment: assigning agents to executive roles caused drift and hallucination. The working model is an army of disposable agents, each assigned a small, scoped task within a larger process. The granularity of the task determines the reliability of the output. Scope creep kills autonomous companies the same way it kills human ones.

The Numbers That Changed the Conversation

Skeptics can no longer lean on "not yet." The revenue is real.

- Polsia: 3,812 active autonomous companies. $3.6M annual run rate. $50/month per company plus 20% revenue share. Active companies grew 212% week-over-week in early March 2026.

- FelixCraft: $78,000 in 30 days from an AI-operated guidebook product.

- KellyClaudeAI: An autonomous agent built by Gauntlet AI founder Austen Allred that builds and ships iOS apps with no human involvement and reports early revenue.

- Infrastructure investment: Goldman Sachs projected that AI investment would approach $200 billion globally by 2025. Nvidia's Jensen Huang has estimated that data center investment will near $1 trillion by 2028.

The economics of ZHCs are not aspirational. They are operational. And they compress the startup economics conversation into a single uncomfortable fact: a solo founder paying $50/month per company can now operate many businesses simultaneously. The cost structure of traditional company-building has broken.
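
To make that cost structure concrete, the pricing quoted above ($50/month per company plus a 20% revenue share) can be worked through in a few lines. This is a rough sketch, assuming the fee is charged per company and the share applies to gross revenue. The function name and the sample figures are illustrative, not Polsia's billing logic.

```python
def monthly_platform_cost(companies: int, monthly_revenue: float,
                          fee_per_company: float = 50.0,
                          revenue_share: float = 0.20) -> float:
    """Platform cost for one founder for one month, under the stated assumptions."""
    return companies * fee_per_company + monthly_revenue * revenue_share


# Illustrative figures only: 100 companies earning $10,000 in total in one month.
# Fees come to $5,000 and the revenue share to about $2,000.
cost = monthly_platform_cost(100, 10_000.0)
```

The fixed fee scales with the number of companies, not with their success, so a portfolio of mostly idle companies still carries a real monthly cost.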

The Governance Vacuum Nobody Is Designing For

Here is what the discourse keeps circling without landing: the ZHC does not eliminate the need for human judgment. It concentrates all human responsibility into a single layer that almost no organization has designed.

When an AI agent sends a legally questionable email, who is liable? When an agent in an autonomous company hires another AI agent and the result is a privacy breach, what regulation applies? When an agent-operated business runs an ad campaign that violates platform terms, who is accountable? The EU AI Act establishes risk-tier classifications. The NIST AI Risk Management Framework outlines responsible deployment practices. Neither framework was built for entities where no human performs operational work.

Engineering leaders face a more immediate version of this problem:

- Trust architecture becomes the product: In a ZHC, the governance layer is not a compliance checkbox. It is what determines whether the business can scale without catastrophic failure.

- Audit trails replace management: Traditional management exists to catch and correct errors before they compound. In a ZHC, audit infrastructure does that work. Most current frameworks provide it. Most organizations do not know how to read it.

- Graduated autonomy is not optional: The KPMG research found that agents given broad roles drift. Narrow task scoping, with humans reviewing at critical decision gates, is what produces consistent output at scale.
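
The audit-trail and decision-gate ideas in the list above can be sketched together. This is a minimal in-memory illustration, not any framework's real interface: `Decision`, `AuditLog`, `gate`, the two risk tiers, and the outcome strings are all assumed names.

```python
import time
from dataclasses import asdict, dataclass


@dataclass
class Decision:
    agent: str
    action: str
    rationale: str  # why the agent chose this action, not just what it did
    risk: str       # "low" or "high"; the two tiers are an assumption


class AuditLog:
    """Append-only, in-memory log of agent decisions and their outcomes."""

    def __init__(self):
        self._entries = []

    def record(self, decision, outcome):
        entry = {"ts": time.time(), "outcome": outcome, **asdict(decision)}
        self._entries.append(entry)

    def entries(self):
        return list(self._entries)  # return a copy so callers cannot edit history


def gate(decision, log, approve=None):
    """Execute low-risk decisions; route high-risk ones to a human reviewer.

    `approve` is a hypothetical callback representing the human gate. With no
    reviewer attached, a high-risk decision is held rather than executed.
    """
    if decision.risk != "high":
        outcome = "executed"
    elif approve is None:
        outcome = "held_for_review"
    else:
        outcome = "approved" if approve(decision) else "rejected"
    log.record(decision, outcome)
    return outcome


log = AuditLog()
first = gate(Decision("wrk-1", "send_newsletter", "weekly update", "low"), log)
second = gate(Decision("wrk-1", "change_pricing", "competitor cut prices", "high"), log)
third = gate(Decision("wrk-2", "issue_refund", "duplicate charge", "high"), log,
             approve=lambda d: True)
```

Every path through `gate` writes to the log, including held and rejected decisions, so the record shows what the agents wanted to do as well as what they did.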

The demand problem compounds the governance problem. The bottleneck in zero human business is no longer execution. Thousands of autonomous companies can be spun up in hours. The constraint is demand. Who buys from a thousand AI-generated businesses? The ZHC solves for supply. It creates a demand crisis that governance, brand trust, and human oversight may be the only structures capable of addressing.

What This Means for Engineering and Product Leaders

The ZHC model will not stay confined to solo founders and experimental platforms. Enterprise adoption is already in motion. McKinsey reports that nearly eight in ten companies use generative AI, yet roughly as many report no significant bottom-line impact. The gap between adoption and value is exactly where agentic architectures operate.

The shift for technical leaders is not "should we build this." It is "what breaks when we do."

- Observability becomes the engineering priority: You cannot debug an autonomous company the way you debug a codebase. The telemetry layer must capture agent decisions, not just agent outputs.

- Scope contracts replace job descriptions: Every agent must have a defined boundary. Not a role. Not a title. A scope. What it can decide. What requires escalation. What triggers a stop.

- Failure modes cascade differently: In a human organization, a bad decision propagates slowly. In a ZHC, a misaligned agent can execute thousands of actions before a human notices. Containment architecture matters more than correction mechanisms.

- The shift from doer to director: The engineering org does not disappear in a ZHC model. It moves up the stack. Engineers become the designers of systems that make decisions, not the people making them.
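
A scope contract with built-in containment might look something like the following. It is a hedged sketch under simple assumptions: actions are plain strings, the three verdicts map to "can decide", "requires escalation", and "triggers a stop", and containment is a hard cap on the number of allowed actions. `ScopeContract`, `Verdict`, and the action names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"
    STOP = "stop"


@dataclass
class ScopeContract:
    allowed: frozenset   # actions the agent may take on its own
    escalate: frozenset  # actions that need a human decision
    max_actions: int     # containment: hard cap on autonomous actions
    used: int = 0

    def check(self, action: str) -> Verdict:
        if action in self.escalate:
            return Verdict.ESCALATE
        if action not in self.allowed:
            return Verdict.STOP  # out of scope: halt rather than guess
        if self.used >= self.max_actions:
            return Verdict.STOP  # budget exhausted: limit the blast radius
        self.used += 1
        return Verdict.ALLOW


contract = ScopeContract(
    allowed=frozenset({"send_email"}),
    escalate=frozenset({"issue_refund"}),
    max_actions=2,
)
attempts = ["send_email", "send_email", "send_email", "issue_refund", "delete_database"]
verdicts = [contract.check(a) for a in attempts]
```

The third `send_email` is stopped even though it is in scope, because the budget is spent. That cap is the containment piece: a misaligned agent runs out of allowed actions long before it reaches thousands.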

Gartner identified agentic AI as the top strategic technology trend for 2025. Deloitte warned that while agentic AI will become mainstream, risks include strategic misalignment, cyber exposure, and regulatory blind spots. Both assessments treat the risks as edge conditions. They are not. They are the primary engineering problem of the ZHC era.

The New Org Chart Has One Human Layer

The zero human company is not the end of human work in business. It is a compression of human work into a single, high-leverage, high-stakes layer that most organizations have never had to build.

That layer is governance. Not compliance. Not oversight as an afterthought. The intentional design of what agents can decide, what they cannot, how their decisions are logged, how failures are contained, and how humans re-enter the loop when autonomous judgment fails. The companies that treat this layer as an engineering problem will outcompete the companies that treat it as a legal problem. The zero human company does not need fewer humans. It needs the right ones in the right position in the architecture, which is the position of trust.