Most Multi-Agent Systems Are One Agent in a Costume

Most Multi-Agent Systems Are One Agent in a Costume

Multi-agent is the most over-engineered word in AI right now.

Teams stand up five Claude calls in a trench coat, label them planner, researcher, writer, critic, and editor, and call it an architecture. The five calls share the same model, the same prompt scaffold, and the same blind spots. The output is worse than one good prompt.

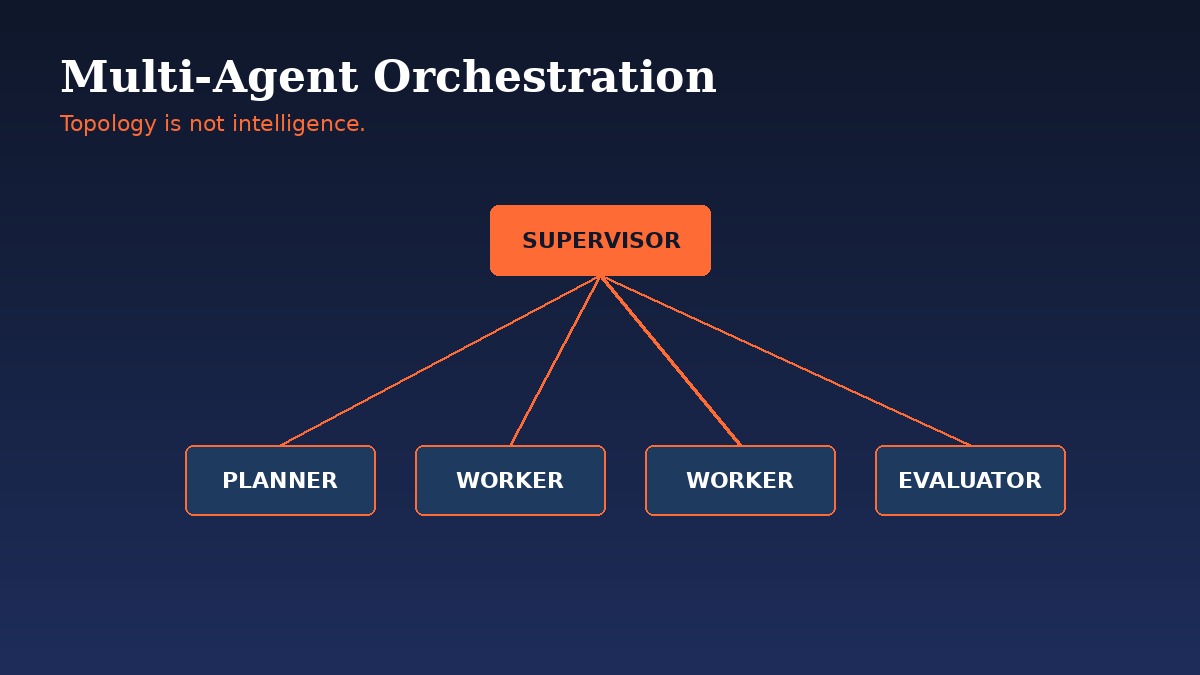

Topology is not intelligence.

The Costume Problem

The dirty secret of most multi-agent demos is that the agents do not actually disagree. They cannot. Same base model, same training data, same instruction-tuning. Five copies of the same brain in different hats produce five rephrasings of the same answer, and the "evaluator" rubber-stamps whatever the "generator" wrote.

Real multi-agent value comes from real differentiation:

- Different models. A reasoning-heavy planner, a fast cheap router, a vision-capable extractor. Mix capabilities, not personalities.

- Different context windows. Workers see only their slice. The supervisor sees the seams. Information boundaries are the architecture.

- Different tools. One agent has database access, another has web search, a third has nothing but a calculator. Capability asymmetry forces real specialization.

- Different incentives. The evaluator is graded on catching errors, not on agreeing with the writer.

Without those differences, you have one agent paying five API bills.

The Three Topologies That Actually Ship

Strip the marketing and the field has converged on three patterns. Everything else is a remix.

Supervisor and workers. A central orchestrator decomposes the task, routes subtasks to specialists, and synthesizes the result. Kore.ai calls it the default for enterprise workflows that need traceability and centralized control. Easy to debug, easy to audit, scales horizontally by adding workers.

Planner, generator, evaluator loop. One agent drafts a plan, another executes it, a third critiques the output, and the loop runs until quality criteria are met. The evaluator-optimizer pattern is the cleanest expression. Works for code, copy, research reports, anything with measurable quality.

Debate and council. Multiple agents argue different positions, a chair synthesizes. Microsoft's group chat orchestration formalizes it for decisions that benefit from adversarial pressure. Slower, more expensive, occasionally brilliant.

Pick one. Master it. Combine later.

The Latency Tax Nobody Budgets For

Hierarchies stack up faster than people expect. Three levels of supervisors with two-second model calls each add six seconds of coordination overhead before any worker starts. Four levels add eight. The user sees a spinner while the org chart deliberates.

The fixes are unglamorous:

- Flatten when you can. Two layers usually beat three. Three usually beat four.

- Route, do not delegate. A fast router model picks the worker directly, skipping the supervisor's reasoning step on simple tasks.

- Fan out the parallelizable. Independent subtasks run concurrently, not sequentially. Latency becomes the slowest worker, not the sum.

- Cache the planner. If the same task class arrives repeatedly, memoize the plan and skip the reasoning.

Every layer of orchestration is a tax on the user's patience. Charge it only when it earns its keep.

Where Multi-Agent Systems Fail

The failure modes repeat with eerie consistency. Anthropic's analysis of more than 200 enterprise agent deployments found that 57 percent of failures originated in orchestration design, not in the individual agents.

The pattern looks like this:

- The passive supervisor. Forwards the request without decomposing it. Becomes a slow proxy.

- The vague evaluator. Says "not good enough" without telling the writer what to change. Loops produce random rewrites.

- Context bleed. Workers see too much of the parent task and start solving for the wrong objective.

- Self-verification collapse. The generator and the evaluator share so much context that the critique becomes endorsement.

- Loop runaway. No stopping criterion, no token budget, the system spirals on hard problems and burns the bill.

None of these are model problems. They are architectural problems wearing model-shaped masks.

The Frameworks Are a Distraction

LangGraph, CrewAI, AutoGen, OpenAI Agents SDK, Anthropic's Agent SDK. They all implement the same primitives: handoffs, tool calls, state, evaluation loops. The framework matters less than the discipline applied inside it.

The teams shipping production multi-agent systems share four habits:

- Explicit routing criteria. The supervisor's prompt names every specialist and the exact conditions for picking each one. Explicit criteria outperform implicit by 31 percent on task completion.

- Hard stopping conditions. Token budgets, iteration caps, confidence thresholds. The loop knows how to die.

- Structured handoffs. Agents pass typed objects, not free-text essays. Pydantic schemas, JSON contracts, anything that prevents prompt drift.

- Eval harnesses per agent. Each specialist gets its own test set. Regressions are caught at the unit level, not after deployment.

When to Stay Single-Agent

The honest answer most architects skip. A lot of tasks do not need multi-agent at all. A single capable model with good tools and a clear prompt outperforms a baroque orchestration scheme on:

- Tasks that fit in one context window

- Tasks with linear dependencies and no branching

- Tasks where speed beats thoroughness

- Tasks where the user will iterate anyway

Multi-agent earns its keep when the problem genuinely splits into independent subproblems, when verification needs an outside eye, or when context windows would otherwise overflow. Everywhere else it is overhead.

Build the Org Chart, Not the Costume Party

The mental model that works is not "team of AI employees." It is "distributed system with LLM nodes." Treat each agent as a service with a contract, an SLA, and a failure mode. Treat the orchestrator as the API gateway. Treat the eval harness as the monitoring stack.

The teams that internalize this ship reliable systems. The teams that keep dressing up one model in five hats keep shipping demos. The difference between the two is architecture.